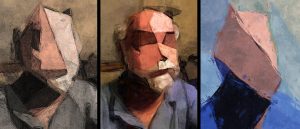

Bringing together an interdisciplinary team from journalism, computer science, cognitive science, fine art and anthropology, we have researched and created a wholly new technique to anonymize interview subjects and scenes in regular and 360 video. Our goal was a working technique that conveys emotion and information far better than current anonymization techniques. We first researched the best techniques used today, which generally pixelate the face using face tracking (a moving region that follows the face and pixelates only that area). Drawing on our expertise and that research, we have developed a new, painterly AI approach to anonymization, now in its third version (v.3). When painting a portrait, artists attempt to convey both the sitter's outer resemblance (how they look) and inner resemblance (who they are).

Our research draws on more than 100 years of artistic technique to reduce outer resemblance (what someone outwardly looks like) while preserving as much as possible of a subject's inner resemblance (what they are conveying, how they are feeling). Our v.3 system uses several stages of AI processing to simulate a smart painter who applies abstraction techniques to repaint regular or 360 video, as if an artist were painting every frame. Note that our multistage AI art process is not image processing: it does not alter the pixels of the source frames (as you might in an Adobe product). Instead, it paints a new, painterly rendering of every frame of the video. The process is open and dynamic, with levels of control available throughout. Our eventual goal is for either the interviewee or the documentary producer to be able to use options in the system to customize the final result to suit their wants and needs.

We have completed the first two phases of the project: background research, and a preliminary experiment testing whether AI-anonymized video retains emotional resonance and outperforms traditionally anonymized footage. For the experiment, we tested the AI on sample footage, chose our test narratives, and filmed actors performing our test scenes. Based on the results of the pre-study described in the previous section, we will recalibrate our AI filter and anonymization method, and we are partnering with a journalism organization for our field trial.

Having demonstrated the need for this tool through background research, we have moved on to testing it and preparing it for field trials. In development, we have shown that the AI filter can render 360 footage. Through our preliminary experiments, we have shown that the tool can anonymize a subject's identity while retaining an emotional connection.

We have also put considerable effort into making the AI anonymization process irreversible, protecting the tool against reverse-engineering attempts to reconstruct the source footage. This is achieved in part by adding an editorial decision-maker to the first step of the anonymization process. An editor or someone involved in producing the source footage can manually alter the interviewee's image to change any distinctive identifying features (e.g., jawline, nose) before the AI takes over and paints the rest of the abstraction.
